The most advanced technology of our time is not pushing us forward. It is pulling us backward — and that might be the most interesting thing happening in computing today.

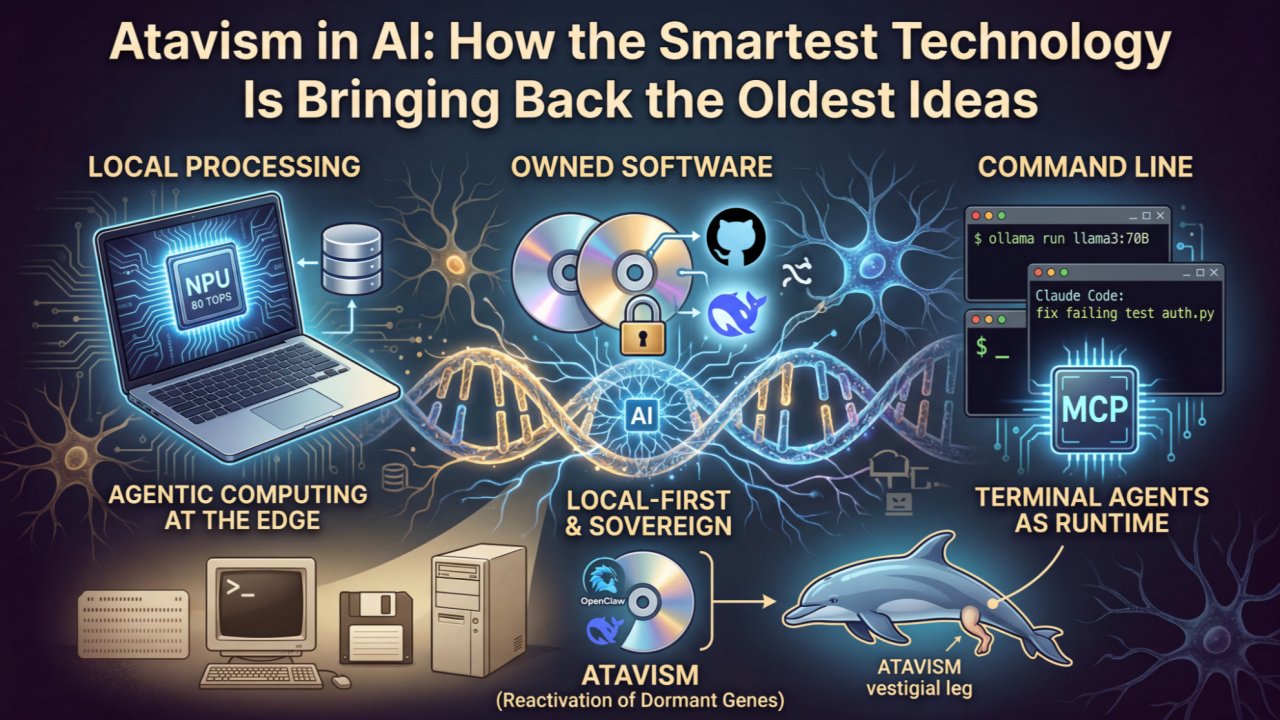

We are witnessing AI drive a mass migration away from the cloud and back to local machines. Away from SaaS subscriptions and back to software you own. Away from polished GUIs and back to the command line. Three decades of computing evolution, seemingly reversing course in under three years.

Biologists have a word for this: atavism.

What Is Atavism?

In evolutionary biology, an atavism (from Latin atavus, meaning "great-grandfather's grandfather") is the reappearance of an ancestral trait that had been lost through generations of evolution. The genes were never deleted — they went dormant, buried in DNA, waiting for the right conditions to switch back on.

Dolphins occasionally grow vestigial hind limbs — a throwback to the land-walking ancestors they left behind 50 million years ago. Hens sometimes develop teeth, a trait inherited from their dinosaur lineage. The genetic code for these features was always there. It just needed a trigger.

The same pattern is now unfolding in technology. Computing paradigms we declared obsolete — local processing, owned software, command-line interfaces — are re-emerging at scale. The genetic code of these older architectures was never erased from our technological DNA. It was dormant. And AI has reactivated it.

The Three Atavisms

1. From Cloud Back to On-Prem and On-Device (The Rise of "Edge AI")

Ancestral trait: Local computation on personal hardware

Dormant period: ~2005–2020 (the cloud era)

Trigger: AI's unique demands for privacy, latency, and cost efficiency

For fifteen years, the industry narrative was clear: everything moves to the cloud. Storage, compute, intelligence — all centralized in hyperscaler data centers. Local processing was a relic of the beige-box PC era.

Then AI changed the economics.

The hardware shift is already here. Every major chipmaker is now engaged in an NPU arms race — embedding dedicated AI processors into consumer devices:

Qualcomm Snapdragon X2 Elite — 80 TOPS. Nearly double the previous generation. 3nm process, 22+ hours of battery life. Rumors point to 120 TOPS in the next cycle.

AMD Ryzen AI Max 300 — 50 TOPS NPU plus a 40-unit RDNA 3.5 GPU. Enough to run Llama 3 70B entirely on a laptop.

Intel Panther Lake — 50 TOPS NPU, 180 total platform TOPS with Xe3 GPU.

These are not incremental improvements. The goal across all three vendors: full-day agentic computing — an AI assistant running continuously in the background for 15+ hours on a single charge.

Apple Intelligence, announced in June 2024 and launched that October, runs a ~3 billion parameter model entirely on-device using the Neural Engine. Writing tools, image editing, and contextual Siri are all processed locally. For these on-device requests, data never leaves the device and is never stored by Apple. When local capacity is exceeded, requests are routed to Private Cloud Compute, where data is processed ephemerally and never retained. In 2025, Apple opened the Foundation Models framework, letting any developer tap the on-device model; inference is free and works offline.

Google's Gemini Nano made Android the first mobile operating system with a built-in on-device foundation model. Pixel Screenshots performs local image analysis. Recorder summarizes meetings without an internet connection. Phone call screening runs entirely on the device. By August 2025, Google opened the ML Kit GenAI APIs to third-party developers.

Microsoft's Copilot+ PCs, announced May 2024, introduced a new system requirement: a minimum 40 TOPS NPU alongside CPU and GPU. The Recall feature builds an entirely on-device semantic index of everything you see and do on your computer — searchable through natural language, processed locally, stored locally.

The software layer followed the hardware. When Georgi Gerganov released llama.cpp on March 10, 2023 — a pure C/C++ implementation of LLaMA inference with zero dependencies — his stated goal was modest: "run the model using 4-bit quantization on a MacBook." It now has over 91,000 GitHub stars and enabled an entire ecosystem of local AI. Ollama grew 180% year-over-year and surpassed 100,000 GitHub stars, becoming the third fastest-growing project by contributors on all of GitHub in 2024. LM Studio and Jan.ai brought local LLMs to non-technical users through intuitive desktop interfaces.
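To make the quantization point concrete, here is a rough back-of-the-envelope sketch of why 4-bit weights matter for laptops. It counts weight storage only; the function name is illustrative, and it ignores activation and cache memory as well as the per-block scale factors that real quantized formats store, so actual files are somewhat larger.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes).

    Simplifying assumption: every weight takes exactly `bits_per_weight` bits.
    Real quantized formats add small per-block metadata on top of this.
    """
    if num_params <= 0 or bits_per_weight <= 0:
        raise ValueError("num_params and bits_per_weight must be positive")
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    # A 70-billion-parameter model at three common precisions.
    for bits in (16, 8, 4):
        size = weight_memory_gb(70e9, bits)
        print(f"70B parameters at {bits}-bit: ~{size:.0f} GB of weights")
```

Under these assumptions, dropping from 16-bit to 4-bit weights cuts a 70B model from roughly 140 GB to roughly 35 GB, which is the difference between needing a server and fitting in a high-memory laptop.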

Then came DeepSeek R1 on January 20, 2025. It was released as fully open-source with open weights, was built at a fraction of the cost of comparable models, and shipped with six smaller distilled versions designed to run on local devices. DeepSeek's app shot to #1 on the Apple App Store within days and triggered a stock market sell-off on January 27 as investors fundamentally reassessed how much cloud AI infrastructure the world actually needs.

The market has spoken. Edge computing was a $33.9 billion market in 2024. It is projected to reach $702.8 billion by 2033, a compound annual growth rate of about 40%. Gartner predicted that 75% of enterprise data would be processed at the edge by 2025, up from 10% in 2018. By 2026, over 42% of developers will be running LLMs entirely on local machines.
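The growth rate quoted for the edge computing market can be checked from the two endpoint figures. The sketch below assumes a standard compound annual growth rate over the nine years from 2024 to 2033; the function name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` years."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    # Figures from the text: $33.9B in 2024, $702.8B projected for 2033.
    rate = cagr(33.9, 702.8, 2033 - 2024)
    print(f"Implied annual growth: {rate:.1%}")
```

The implied rate comes out at roughly 40% per year, consistent with the projection as stated.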

The cloud is not dying. But the assumption that all intelligence must live there is already dead.

2. From SaaS Back to Software You Own (The "SaaSpocalypse")

Ancestral trait: Software as a purchased, locally installed product

Dormant period: ~2010–2024 (the SaaS era)

Trigger: AI agents that replicate SaaS functionality without per-seat subscriptions

For over a decade, the industry orthodoxy was that software had evolved beyond the era of ownership. You no longer bought software — you rented it. Per seat, per month, forever. The vendor maintained it, updated it, and controlled it. You paid for access and hoped they did not change the terms.

AI is dismantling that model from two directions.

From the bottom up, open-source local alternatives are matching or exceeding their SaaS equivalents:

- ChatGPT / Claude API → Ollama + Llama 3.3, DeepSeek R1 — GPT-4-class reasoning on your own hardware

- ChatGPT UI → Jan.ai, Open WebUI — 100% offline ChatGPT replacements

- Midjourney / DALL-E → Stable Diffusion, FLUX — full image generation on consumer GPUs

- Cloud speech-to-text → Whisper.cpp — 2x faster than cloud, 99 languages, completely offline

- Notion → AppFlowy — open-source, AI-integrated, local-first

- OpenAI API → LocalAI — a drop-in replacement running on consumer hardware
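What "drop-in replacement" means in practice can be sketched as follows: the request body stays the same and only the base URL changes. This is a minimal illustration using the Python standard library, not any vendor's official client. The local address, port, and model names are assumptions about a typical setup with an OpenAI-compatible local server, and the request is built but deliberately not sent.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "not-needed") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Local servers commonly ignore the key; hosted APIs require a real one.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Assumed endpoints for illustration; adjust host, port, and model to your setup.
HOSTED_BASE_URL = "https://api.openai.com/v1"
LOCAL_BASE_URL = "http://localhost:11434/v1"  # hypothetical local OpenAI-compatible server

if __name__ == "__main__":
    prompt = "Summarize the release notes in three bullets."
    hosted = build_chat_request(HOSTED_BASE_URL, "hosted-model-name", prompt)
    local = build_chat_request(LOCAL_BASE_URL, "local-model-name", prompt)
    # Same payload shape, different destination.
    print(hosted.full_url)
    print(local.full_url)
```

Sending either request is a single `urllib.request.urlopen(request)` call; the point of the sketch is that application code does not need to change when the endpoint moves from a vendor's cloud to your own machine.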

From the top down, the local-first software movement — first articulated by Martin Kleppmann and colleagues at Ink & Switch in their 2019 manifesto — has become an engineering philosophy. Its seven ideals: fast performance, multi-device sync, offline capability, collaboration, longevity, privacy, and user control. The first Local-First Conference was held in 2024, and the community has grown rapidly since.

The breakout case study is OpenClaw. Originally published in November 2025 by Austrian developer Peter Steinberger as "Clawdbot," it is an open-source autonomous AI agent (MIT License) that runs locally. The gateway, tools, and memory live on your machine — not in a vendor's cloud. Conversations are stored as plain Markdown and YAML files: inspectable, editable, Git-backable. In late January 2026, it gained 100,000+ GitHub stars in under a week — one of the fastest-growing repositories in GitHub history. OpenAI subsequently acquired OpenClaw, signaling that even the largest cloud AI company recognized the gravitational pull of local-first.

The financial consequences have been severe. Between January 15 and February 14, 2026, approximately $2 trillion in market capitalization evaporated from the software sector — a period now referred to as the "SaaSpocalypse." Software price-to-sales ratios compressed from 9x to 6x, levels not seen since the mid-2010s. Forward earnings multiples plummeted from 39x to 21x. Hardest hit: Atlassian (–35%), Salesforce (–28%) — companies whose core workflows are exactly what AI agents automate. CIOs began signaling budget exhaustion for traditional per-seat software.

The SaaS model is not disappearing entirely. But the assumption that every workflow must be a monthly subscription to a vendor-hosted service is being challenged in a way it has not been since before the cloud era.

3. From GUI Back to the Command Line (The "AI Renaissance")

Ancestral trait: Text-based interaction via terminal

Dormant period: ~1984–2024 (the GUI era)

Trigger: AI agents that work better through composable text commands than through graphical interfaces

Of the three atavisms, this one may be the most counterintuitive. The graphical user interface was supposed to be the permanent evolutionary endpoint of human-computer interaction. The Macintosh in 1984, the Web in 1995, the iPhone in 2007 — each milestone moved us further from text and closer to visual, touch-based, point-and-click interaction.

Now the most sophisticated AI tools are shipping as terminal applications.

Claude Code (Anthropic, February 2025): an agentic coding tool that lives entirely in the terminal. It reads your codebase, executes multi-step tasks, manages git workflows, and writes code — all through natural language commands typed into a terminal. By January 2026, a Faros AI survey found 59% of professional developers were using it.

Gemini CLI (Google, June 2025): open-sourced under Apache 2.0, providing terminal access to Gemini models with a one-million-token context window. Google — a company that built its empire on web interfaces — shipped an AI tool for the command line. Free tier: 60 requests per minute, 1,000 per day.

GitHub Copilot CLI (generally available February 25, 2026): evolved from a simple terminal assistant into a "full agentic development environment." It plans, builds, reviews, and remembers across sessions. GitHub deprecated its older GUI-adjacent gh copilot extension in favor of the terminal-native version.

Aider, Warp, and a growing constellation of terminal-first AI tools have made "terminal agents the fastest-growing category of AI developer tools" in 2025.

Why is the command line winning? The answer lies in what AI agents actually need:

- Composability. Unix pipes and shell commands are infinitely composable. GUIs are not. An AI agent that can chain grep | awk | sed | git with natural language glue is more powerful than one constrained by button clicks.

- Autonomy. CLI agents can take multi-step autonomous actions — reading files, running tests, committing code, iterating on errors — without requiring a human to click through dialog boxes at every step.

- Speed. Text in, text out. No rendering overhead, no layout engines, no DOM manipulation. The terminal is the lowest-latency interface between a human and a machine.

- Transparency. Every action is visible as text. Every command is inspectable, auditable, and reproducible. GUIs hide complexity behind abstraction layers; the terminal exposes it.
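The composability point above is easiest to see in miniature. The sketch below models shell-style pipes as plain Python functions over streams of lines; the helper names (`pipe`, `grep`, `field`, `substitute`) are illustrative stand-ins for their Unix counterparts, not a real library.

```python
import re
from functools import reduce
from typing import Callable, Iterable, Optional

Stage = Callable[[Iterable[str]], Iterable[str]]


def pipe(*stages: Stage) -> Stage:
    """Compose stages left to right, like `a | b | c` in a shell."""
    def run(lines: Iterable[str]) -> Iterable[str]:
        return reduce(lambda stream, stage: stage(stream), stages, lines)
    return run


def grep(pattern: str) -> Stage:
    """Keep only lines matching the regular expression."""
    regex = re.compile(pattern)
    return lambda lines: (line for line in lines if regex.search(line))


def field(index: int, sep: Optional[str] = None) -> Stage:
    """Keep one field per line (0-based), roughly like awk's field selection."""
    return lambda lines: (line.split(sep)[index] for line in lines)


def substitute(pattern: str, replacement: str) -> Stage:
    """Rewrite each line with a regex substitution, roughly like sed's s///."""
    regex = re.compile(pattern)
    return lambda lines: (regex.sub(replacement, line) for line in lines)


if __name__ == "__main__":
    test_log = [
        "PASS tests/test_auth.py::test_login",
        "FAIL tests/test_auth.py::test_token_refresh",
        "FAIL tests/test_db.py::test_migration",
    ]
    # Each stage knows nothing about the others, yet they combine freely.
    failing_files = pipe(grep(r"^FAIL"), field(1), substitute(r"::.*$", ""))
    print(list(failing_files(test_log)))
```

Because every stage has the same shape (lines in, lines out), an agent can assemble new pipelines from existing pieces without anyone having designed that combination in advance, which is the property a fixed set of GUI buttons lacks.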

As one engineer put it: "Your terminal is an AI runtime now."

The Pattern Behind the Patterns

These three reversals are not coincidences. They share a common structure:

- Compute: Cloud-centralized → On-device / edge

- Software: SaaS (rented, vendor-hosted) → Local-first (owned, self-hosted)

- Interface: GUI (visual, browser-based) → CLI (text, terminal-based)

- Control: Vendor-controlled → User-controlled

- Data: Stored remotely → Stored locally

- Cost model: Recurring subscription → One-time or open-source

The direction is consistent across every dimension: from centralized and controlled toward local and sovereign.

And they are all driven by the same force. AI's unique combination of privacy sensitivity, latency requirements, computational cost, and need for composable autonomy has created environmental pressure that reactivated dormant computing traits across the entire stack — simultaneously.

Is This Really Atavism? Or Something Else?

The strongest counter-argument is that this is not true regression but a spiral — each return to an older paradigm comes back at a higher level of sophistication:

- Local processing in 2025 is not local processing in 1995. Modern on-device models leverage quantization, neural processing units, and knowledge distillation — techniques that did not exist in the PC era. A laptop running Llama 3 70B through an AMD Ryzen AI Max 300 is categorically different from a Pentium running WordPerfect.

- The CLI of 2025 is not the CLI of 1985. Today's terminal tools understand natural language, manage multi-step autonomous workflows, integrate with modern toolchains through protocols like MCP, and can reason about code semantics. Typing "fix the failing test in auth.py" into Claude Code is not the same as typing gcc -o program main.c.

- Owning software in 2025 is not owning software in 2005. Local-first applications support real-time collaboration, seamless sync across devices, and optional cloud augmentation. Jan.ai runs offline but can also route to cloud models when local capacity is insufficient. This is not the old world of isolated desktop applications.

The biological analogy actually accounts for this. Atavisms are not perfect replicas of ancestral traits. A dolphin's vestigial hind limb is not the fully functional leg of its terrestrial ancestor — it is a partial reactivation, shaped by the modern organism's body plan. The trait returns, but in a context that has evolved since it was last expressed.

What makes the atavism metaphor especially apt is the element of surprise. These paradigms were widely considered obsolete. The industry consensus was that local compute, owned software, and command-line interfaces were evolutionary dead ends — quaint relics left behind by the march toward cloud, SaaS, and GUI. Their large-scale return was not predicted by the mainstream, just as a dolphin growing hind legs is not predicted by its current phenotype.

What Is Genuinely New

Four things distinguish this moment from a simple cyclical swing:

1. AI as the catalyst. Previous pendulum swings between centralized and distributed computing were driven by economics and capability — mainframes were expensive, PCs were cheap, bandwidth got fast, cloud got cheap. This shift is driven by a fundamentally new kind of workload that creates unique pressures around privacy, latency, cost, and autonomy simultaneously.

2. The convergence. All three atavisms are happening at the same time, driven by the same force. Cloud-to-local, SaaS-to-owned, and GUI-to-CLI are not independent trends — they are three expressions of one underlying pressure. That simultaneity is unprecedented.

3. The speed. Previous computing paradigm shifts took 15–20 years to unfold. This one is compressing into 2–3 years. From llama.cpp (March 2023) to the SaaSpocalypse (February 2026) — the entire arc from possibility to market disruption took under three years.

4. The kill zone has shifted from startups to incumbents. This is the most consequential difference, and it is worth pausing on.

In every previous wave of tech disruption, the pattern was predictable: a new platform emerges, it kills a generation of startups that failed to adapt, and the incumbents survive by acquiring or copying. When a new LLM dropped in 2023 or 2024, the casualties were AI wrappers and thin-moat startups. The Googles and IBMs of the world watched from the sidelines, unscathed.

That pattern is over.

On February 23, 2026, IBM shares crashed 13.2% in a single day — the company's worst session since October 2000 — after Anthropic published a blog post. Not a product launch. Not a competitive product. A blog post explaining that Claude Code could now automate the exploration and analysis of COBOL codebases: mapping dependencies across thousands of lines of legacy code, documenting workflows, and identifying modernization risks that "would take human analysts months to surface." IBM's stock is now down over 25% in a month.

The threat is existential because of the numbers underneath. An estimated 95% of ATM transactions in the United States still run on COBOL. Hundreds of billions of lines of COBOL execute in production every day, powering banking systems, airlines, and government infrastructure. Legacy mainframe modernization and consulting is a cornerstone of IBM's services revenue. The reason these systems survived this long was not that COBOL was good; it was that understanding COBOL was so expensive that rewriting it cost more than maintaining it. AI flips that equation. When an AI agent can read, document, and refactor a codebase written in a 67-year-old language in hours instead of months, the moat around legacy consulting evaporates. Morgan Stanley can argue that enterprises may not want to leave the mainframe, but the calculus changes permanently when the cost barrier to leaving drops by orders of magnitude.

This is not a business cycle. IBM cannot wait for the pendulum to swing back. There is no version of the future where AI gets worse at reading COBOL.

This is the shift that separates atavism from a cycle. In previous technology waves, incumbents were the survivors. In this one, they are the targets. Anthropic does not need to build a mainframe consulting practice to threaten IBM. It just needs to make an announcement — or ship a demo — and hundreds of billions of dollars in market capitalization reprices overnight.

When every product launch, every blog post, every viral demo from an AI company can reprice the market's expectations of a century-old incumbent, we are no longer watching a pendulum. We are watching a one-way door.

The Executive Implication

If you are leading a technology organization, the atavism in AI demands three strategic recalibrations:

Recalibrate your infrastructure assumptions. The question is no longer "cloud or on-prem" — it is "which intelligence belongs where." Sensitive data processing, low-latency inference, and always-available AI features increasingly belong on-device. Scale-intensive training and burst workloads still belong in the cloud. The hybrid architecture is not a compromise. It is the target state. Early research shows hybrid edge-cloud architectures achieve energy savings of 75% and cost reductions exceeding 80% versus pure cloud.
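One way to turn "which intelligence belongs where" into something reviewable is a small placement rule. The sketch below is a hypothetical policy, not a recommended standard: the workload fields, the latency threshold, and the rule ordering are all assumptions that each organization would need to set for itself.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    ON_DEVICE = "on-device"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Workload:
    name: str
    handles_sensitive_data: bool
    must_work_offline: bool
    is_training_or_burst: bool
    max_latency_ms: int  # end-to-end latency budget for a response


# Hypothetical threshold: budgets at or below this are treated as low-latency.
LOW_LATENCY_BUDGET_MS = 100


def place(workload: Workload) -> Placement:
    """Apply the placement rules in priority order (the order is an assumption)."""
    # Privacy and availability constraints win over everything else.
    if workload.handles_sensitive_data or workload.must_work_offline:
        return Placement.ON_DEVICE
    # Scale-intensive training and burst workloads go to the cloud.
    if workload.is_training_or_burst:
        return Placement.CLOUD
    # Remaining interactive work stays local only if the budget is tight.
    if workload.max_latency_ms <= LOW_LATENCY_BUDGET_MS:
        return Placement.ON_DEVICE
    return Placement.CLOUD


if __name__ == "__main__":
    examples = [
        Workload("contract redaction", True, False, False, 2000),
        Workload("inline autocomplete", False, False, False, 50),
        Workload("nightly fine-tuning", False, False, True, 60_000),
    ]
    for workload in examples:
        print(f"{workload.name}: {place(workload).value}")
```

Writing the policy down as code makes the hybrid target state explicit: the rules can be versioned, debated, and tested rather than decided case by case.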

Recalibrate your vendor strategy. The SaaSpocalypse is a signal, not a blip. If your AI workflows depend entirely on vendor-hosted SaaS with per-seat pricing, model the scenario where an open-source agent running on local hardware can replicate 80% of that functionality at a fraction of the cost. That scenario is not hypothetical — 78% of companies expect to integrate AI workflows within two years, and a growing proportion are choosing self-hosted models.
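Modeling that scenario can start with a simple break-even calculation. The sketch below is illustrative only: every input in the usage example is a placeholder rather than data, and it makes the simplifying assumption that replacing a share of functionality displaces the same share of SaaS spend.

```python
import math


def breakeven_months(seats: int,
                     saas_price_per_seat_month: float,
                     local_upfront_cost: float,
                     local_running_cost_month: float,
                     coverage: float = 0.8) -> float:
    """Months until a local setup pays back its upfront cost.

    `coverage` is the assumed fraction of SaaS spend the local agent displaces.
    Returns math.inf if the local setup never saves money.
    """
    if not 0 <= coverage <= 1:
        raise ValueError("coverage must be between 0 and 1")
    displaced_spend = seats * saas_price_per_seat_month * coverage
    monthly_saving = displaced_spend - local_running_cost_month
    if monthly_saving <= 0:
        return math.inf
    return local_upfront_cost / monthly_saving


if __name__ == "__main__":
    # Placeholder inputs; substitute your own contract and hardware figures.
    months = breakeven_months(
        seats=200,
        saas_price_per_seat_month=30.0,
        local_upfront_cost=60_000.0,
        local_running_cost_month=1_200.0,
    )
    print(f"Break-even after about {months:.1f} months")
```

Running the same function across a range of coverage values and seat counts gives a quick sensitivity view of where the self-hosted option starts to win and where it never does.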

Recalibrate your interface investments. The developers building your AI-powered future overwhelmingly prefer terminal-based tools for agentic workflows. If your platform strategy assumes everything flows through a web browser or a mobile app, you may be optimizing for the wrong interface paradigm.

Conclusion: The Code Was Always There

Computing, like biology, does not evolve in a straight line. It evolves through variation, selection, dormancy, and reactivation. The paradigms we thought we had outgrown — local compute, owned software, the command line — were never deleted from our technological genome. They were suppressed by environmental conditions that favored their alternatives.

AI has changed the environment. And the old code is switching back on.

The most forward-looking strategy in technology today is, paradoxically, one that takes these ancestral traits seriously. Not as nostalgia. Not as regression. But as the reactivation of proven architectures that are, once again, the best fit for the pressures of the moment.

The smartest technology is bringing back the oldest ideas. That is not a contradiction. That is atavism — and it is the most important pattern in AI right now.
